1. Introduction

Many tasks in image processing need to manipulate images in a spatially varying manner. For example, the quality of different image regions may vary (e.g. a dark face over a bright background), and during image enhancement, it is critical to locally enhance important regions (e.g. faces) while keeping the rest of the image largely intact. As another example, a user may wish to replace the texture over a certain object in a photo.

To facilitate such spatially varying manipulation, an image editing system should possess the capability of figuring out the structure of an image. The structure refers to salient edges and contours of objects and regions in the image. Such structural information can effectively control image manipulation operations within an appropriate spatial limit. In addition, salient edges and contours are also crucial for artistic rendering based on image abstraction and stylization.

Even though an image has more than hundreds of thousands of pixels, salient edges and contours only comprise of a sparse subset of the pixels. Therefore, an important goal in image processing is identifying sparse salient structures from dense pixels. Related goals aim to remove insignificant details and make the input image piecewise constant. In such an image, pixels having similar transformed values essentially form implicit regions. Such a piecewise constant image can significantly reduce the complexity of subsequent processing algorithms because the regions in the transformed image can be easily discovered by clustering the transformed pixel values.

Albeit the aforementioned benefits, achieving piecewise constant results is challenging, which implies one needs to remove not only high-frequency signals but also low-frequency variations. While high-frequency signals can be removed easily with local filters, such as the bilateral filter, low-frequency variations are more “stubborn” and much harder to remove. As long as there exists small differences between adjacent pixel values after filtering, such small differences can still accumulate and give rise to significant differences between distant pixels in the same region, violating piecewise constancy. Therefore, removing low-frequency variations within image regions requires more global operations.

2. A Sparsity Model for Piecewise Image Flattening

Inspired by the sparsity of salient structures as well as the theory on sparse signal recovery, we propose a new sparsity-based model for piecewise image flattening using L1 regularization to achieve the aforementioned goals [1]. It is well known that regularization based on the L1 norm is capable of promoting sparse solutions. In our context, nonzero entries in a sparse solution correspond to pixel pairs across salient edges and contours. If two neighboring pixels have similar colors, their transformed colors are pulled even closer and become almost identical; if there exists a color discontinuity across two adjacent pixels, their transformed colors are still kept apart. Thus, image transformation based on our new sparsity-based model flattens image regions with internal color variations while preserving salient edges and contours.

Our sparsity-based image transform is cast as an energy minimization, which flattens image regions while still achieving a reasonable approximation of the original image. The energy function of this minimization is a weighted summation of three energy terms, including the local flattening, global sparsity and image approximation terms. The local flattening term accumulates weighted L1 differences between pixel pairs that are within each other’s neighborhoods. Because neighboring pixels with similar colors in the input image are assigned larger weights, the L1 differences of the transformed colors at such pixels are forced to be very small, giving rise to the desired flattening effect. The global sparsity term considers interactions between all pairs of representative pixels in the image, and makes similar representative pixels have identical transformed colors. We further impose a third image approximation term that makes the transformed image have minimal deviation from the input image. With this term, transformed pixel colors cannot collapse to the same value.

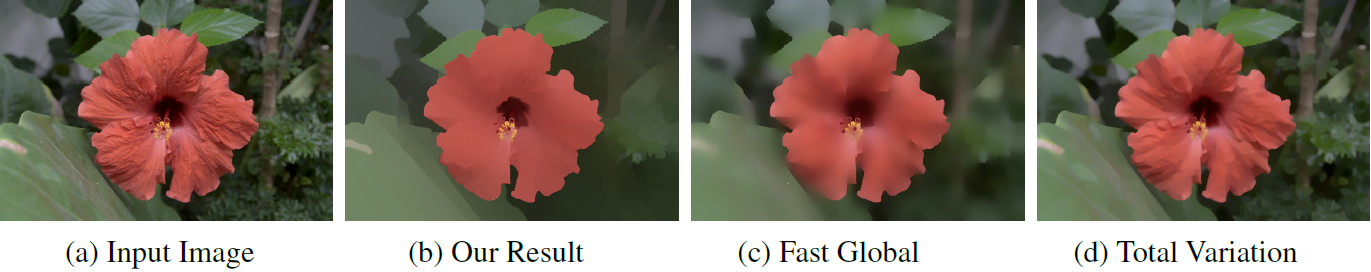

Examples of piecewise image flattening results are shown in Figure 1. A visual comparison between results from our image flattening algorithm and two state-of-the-art edge-aware smoothing algorithms are given in Figure 2. Our sparsity-based model for image flattening has been successfully applied to edge-preserving image smoothing and scene-level intrinsic image decomposition [1].

Figure 1. Piecewise image flattening results.

Figure 2. Comparison between our image flattening result and results from two existing state-of-the-art algorithms: fast global image smoothing and nonlinear total variation based image denoising. Our method thoroughly removes insignificant details while preserving salient contours. Exiting algorithms either blur important features or retain too many details.

3. Piecewise Flat Embedding

We have generalized the above sparsity-based image flattening model to a new nonlinear embedding, called piecewise flat embedding (PFE) [2], which is capable of handling more generic pixel grouping and image segmentation problems.

A common strategy for image segmentation computes an embedding to map pixels to a feature space, where pixels with similar attributes are positioned closer to each other than those with dissimilar attributes. Grouping pixels in this feature space often gives rise to improved segmentation results. Nevertheless, existing methods typically generate smooth embeddings, making it not easy to have clear-cut decisions on object boundaries. Ideally, pixel grouping would be made much easier if an embedding could map original pixels on the same object to a point cloud tightly distributed around a single point in the feature space while points corresponding to pixels on different objects are still kept apart. However, solving such an embedding requires the knowledge of object boundaries in the first place.

Our piecewise flat embedding solves the above dilemma in image segmentation. PFE attempts to identify segment boundaries while suppressing variations within segments. It is based on the theory of sparse signal recovery since segment boundaries consist of a sparse subset of the original pixels. We adopt an L1-regularized energy term in the formulation to promote sparse solutions. A sparse solution in our context implies that there only exists a sparse subset of point pairs whose distances in the feature space are sufficiently large, and the distances among the rest of the point pairs are almost zero. Such an embedding essentially form well-separated clusters, each of which is a point cloud tightly distributed around a single center. We have devised a two-stage numerical algorithm based on Bregman iterations as well as effective initialization schemes to solve the proposed embedding.

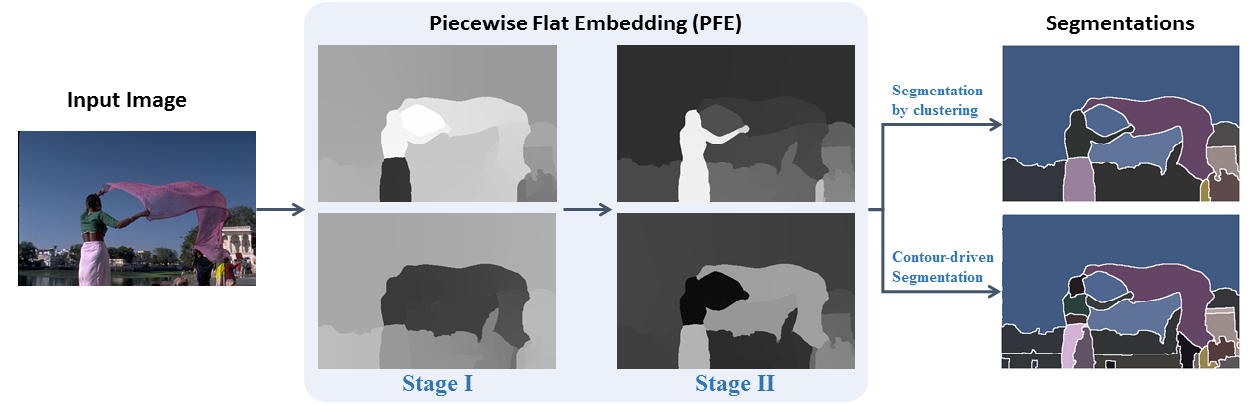

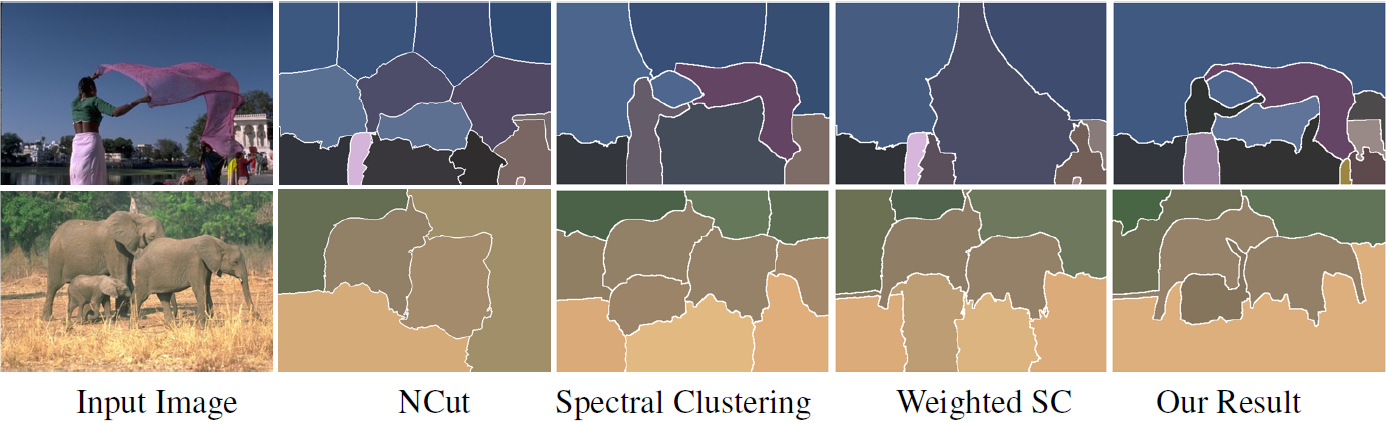

The proposed piecewise flat embedding can be easily integrated into existing image segmentation frameworks, including segmentation based on clustering and hierarchical segmentation based on contour detection. A pipeline for image segmentation based on piecewise flat embedding is shown in Figure 3. Experiments on a popular benchmark (BSDS500) indicate that segmentation algorithms incorporating this embedding can achieve significantly improved results in both frameworks [2]. A visual comparison between our segmentation results using K-means and segmentation results from normalized cuts (NCut), spectral clustering, and weighted spectral clustering is shown in Figure 4.

Figure 3. A pipeline for image segmentation based on piecewise flat embedding.

Figure 4. Comparison between our segmentation results using K-means and segmentation results from normalized cuts (NCut), spectral clustering, and weighted spectral clustering.

References

- S. Bi, X. Han, and Y. Yu. An L1 Image Transform for Edge-Preserving Smoothing and Scene-Level Intrinsic Decomposition. SIGGRAPH 2015, Los Angeles, August 2015 (ACM Transactions on Graphics, Vol 34, No 4, 2015).

- Y. Yu, C. Fang, and Z. Liao. Piecewise Flat Embedding for Image Segmentation. International Conference on Computer Vision (ICCV), Santiago (Chile), December 2015.

Contact

For more information, please contact Prof. Yizhou Yu.